Research Workflow Automation System

- Python

- Automation

- LLM

- Research Workflow

Overview

Academic research involves repetitive, time-consuming tasks: data cleaning, literature searches, statistical analysis, figure generation, and writing. This system automates the entire research pipeline, from raw data to final PDF, starting from a single prompt and requiring no human intervention.

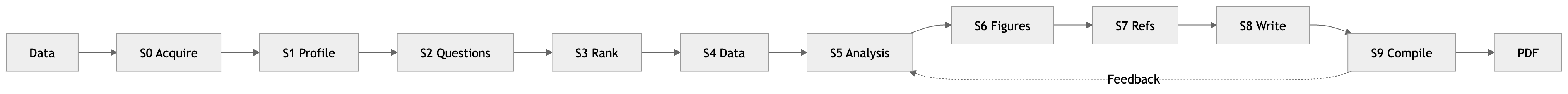

The workflow runs 9 sequential stages and takes more than 60 minutes to execute. It resumes after interruptions, manages token overflow across stages, and validates outputs with Python scripts rather than LLM self-verification.

System Architecture

Orchestrator + Skills Pattern

The system uses a master orchestrator that coordinates all stages, reads progress tracking for resume capability, and handles errors and feedback loops. Each stage is implemented as a separate skill with self-contained instructions.

Linear Workflow with Feedback Loop:
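As a rough illustration, the flow can be written as a short Python loop. Stage numbers and names are taken from the model tiering table, and the loop back from stage 5 to stage 3 follows the feedback loop description. The names `run_stage` and `run_pipeline` and the default `max_cycles=3` are hypothetical; the real orchestrator is a SKILL.md instruction file, not this code.

```python
from typing import Callable

# Stage numbers and names as listed in the model tiering table.
STAGES = {
    0: "Acquire Data",
    1: "Load & Profile",
    2: "Research Questions",
    3: "Score & Rank",
    4: "Acquire Data",
    5: "Analysis",
    6: "Figures",
    7: "Lit Review",
    8: "Write Paper",
    9: "Compile & Review",
}

FEEDBACK_START, FEEDBACK_END = 3, 5  # stages re-run when analysis fails


def run_pipeline(run_stage: Callable[[int], bool], max_cycles: int = 3) -> list[int]:
    """Run stages in order; loop back to stage 3 if stage 5 reports failure.

    run_stage is a hypothetical callback that returns True on success.
    Returns the sequence of stage numbers that were executed.
    """
    executed = []
    cycle = 0
    stage = 0
    while stage <= 9:
        ok = run_stage(stage)
        executed.append(stage)
        if stage == FEEDBACK_END and not ok:
            cycle += 1
            if cycle >= max_cycles:
                raise RuntimeError("Analysis failed after max_cycles attempts")
            stage = FEEDBACK_START  # feedback loop: re-enter at Score & Rank
            continue
        if not ok:
            raise RuntimeError(f"Stage {stage} ({STAGES[stage]}) failed")
        stage += 1
    return executed
```

If the analysis stage fails once and then succeeds, stages 3 to 5 appear twice in the returned sequence and every other stage appears once.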

Key Components

| Component | Description | Location |
|---|---|---|
| Orchestrator | Master coordinator running stages in sequence | `workflow/skills/orchestrator/SKILL.md` |
| Skills | Individual pipeline stages with instructions | `workflow/skills/<stage>/SKILL.md` |
| Shared Scripts | Reusable utilities for progress, context, feedback | `workflow/scripts/*.py` |
| State Files | JSON files tracking progress, context, decisions | `exam_paper/*.json` |

Model Tiering Strategy

Different pipeline stages require different reasoning capabilities. The orchestrator uses a three-tier model system to balance cost and quality:

| Model Level | Model | Used For | Stages |
|---|---|---|---|
| high | opus[1m] | Deep reasoning, complex synthesis | Research Questions (2), Write Paper (8) |
| medium | sonnet | Data inspection, code generation | Load & Profile (1), Score & Rank (3), Analysis (5), Figures (6), Lit Review (7) |
| low | haiku | Simple downloads, mechanical tasks | Acquire Data (0, 4), Compile & Review (9) |
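The tier table can be read as a simple lookup. The sketch below copies the stage assignments and model labels from the table; the dictionary names and the `model_for_stage` helper are hypothetical and only show how such a mapping might be consulted.

```python
# Stage-to-tier assignments copied from the table above.
STAGE_TIER = {
    0: "low", 1: "medium", 2: "high", 3: "medium", 4: "low",
    5: "medium", 6: "medium", 7: "medium", 8: "high", 9: "low",
}

# Model labels exactly as written in the table.
TIER_MODEL = {"high": "opus[1m]", "medium": "sonnet", "low": "haiku"}


def model_for_stage(stage: int) -> str:
    """Return the model label for a stage number (hypothetical helper)."""
    try:
        return TIER_MODEL[STAGE_TIER[stage]]
    except KeyError:
        raise ValueError(f"Unknown stage: {stage}") from None
```

Keeping the mapping in one place means a stage can be moved to a cheaper or stronger tier by changing a single entry.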

Key Innovations

Python-Based Validation

LLMs can hallucinate when asked to verify their own work (“did this work?”) and cannot reliably confirm that a file exists. The system instead uses Python-based file system validation with pre-emptive feasibility checks:

    import os


    def _validate_outputs(expected_outputs: dict) -> None:
        """Validate that expected output files exist and have content."""
        for name, path in expected_outputs.items():
            # Ask the OS directly instead of trusting an LLM's report.
            if not os.path.exists(path):
                raise ValueError(f"Missing required output: {name} at {path}")
            # A zero-byte file counts as a failed output.
            if os.path.getsize(path) == 0:
                raise ValueError(f"Empty output file: {name} at {path}")

The _validate_outputs() function checks file existence and size directly via the OS, raising ValueError if an expected output is missing or empty. complete_stage() calls this validation before marking a stage complete.
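A minimal sketch of how complete_stage() might gate on validation is shown below. The signature, the `progress_path` argument, and the layout written to progress.json are assumptions made for illustration, not the repository's actual implementation.

```python
import json
import os


def _validate_outputs(expected_outputs: dict) -> None:
    """Same checks as the function shown above."""
    for name, path in expected_outputs.items():
        if not os.path.exists(path):
            raise ValueError(f"Missing required output: {name} at {path}")
        if os.path.getsize(path) == 0:
            raise ValueError(f"Empty output file: {name} at {path}")


def complete_stage(progress_path: str, stage: str, expected_outputs: dict) -> None:
    """Mark a stage complete only after its outputs pass validation.

    The signature and the progress file layout are assumptions.
    """
    _validate_outputs(expected_outputs)  # raises before any state is written

    progress = {}
    if os.path.exists(progress_path):
        with open(progress_path, encoding="utf-8") as f:
            progress = json.load(f)

    progress[stage] = {"status": "completed", "outputs": expected_outputs}
    with open(progress_path, "w", encoding="utf-8") as f:
        json.dump(progress, f, indent=2)
```

Because validation runs first, a failed check leaves the progress file untouched, so a resumed run would retry the same stage rather than skip it.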

Token Management: Context Bundles + Pruning

Problem: 9 stages × large JSON files = token overflow. Each stage needs all previous context.

Solution: Two-part system. Context bundles capture semantic decisions (why) rather than raw outputs (what). Each stage adds a compressed layer with:

- key_decisions: What was decided and why
- forward_references: Pointers to preserved files
- stage_summary: Stage-specific output summary
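One way to picture a bundle layer is as a small record holding the three fields above. The sketch assumes each key decision is a small dict and that forward references map a label to a file path; the `BundleLayer` class and `append_layer` helper are hypothetical names.

```python
from dataclasses import asdict, dataclass, field


@dataclass
class BundleLayer:
    """One compressed layer added by a stage (field names from the list above)."""
    stage: int
    key_decisions: list[dict] = field(default_factory=list)       # what and why
    forward_references: dict[str, str] = field(default_factory=dict)  # label -> path
    stage_summary: str = ""


def append_layer(bundle: list[dict], layer: BundleLayer) -> list[dict]:
    """Return a new bundle with the stage's layer appended as a plain dict."""
    return bundle + [asdict(layer)]
```

A later stage would read the accumulated list of layers instead of reloading every earlier raw output, following a forward reference only when it needs the full file.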

Selective pruning rules specify:

- can_prune: Files deletable after each stage
- must_preserve: Files required for downstream stages
- summary_in_context: What summaries remain in context

Pruning modes: safe (after checkpoint stages), aggressive (after every eligible stage), off (debugging). Result: ~80% token reduction while maintaining full resumability.
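The interaction between pruning rules and modes could look roughly like the following. This is a sketch under stated assumptions: a rule is taken to be a dict with `can_prune` and `must_preserve` lists, "eligible" is simplified to "any stage that has a rule", and the `files_to_prune` helper is a hypothetical name.

```python
PRUNE_MODES = ("safe", "aggressive", "off")


def files_to_prune(rule: dict, mode: str, is_checkpoint: bool) -> list[str]:
    """Pick the files that may be deleted after a stage.

    rule is a hypothetical dict with 'can_prune' and 'must_preserve' lists.
    """
    if mode not in PRUNE_MODES:
        raise ValueError(f"Unknown pruning mode: {mode}")
    if mode == "off":
        return []  # debugging: keep everything
    if mode == "safe" and not is_checkpoint:
        return []  # safe mode only prunes after checkpoint stages
    preserved = set(rule.get("must_preserve", []))
    # must_preserve wins if a file is listed in both places.
    return [p for p in rule.get("can_prune", []) if p not in preserved]
```

Returning a list rather than deleting files directly keeps the decision separate from the deletion, which makes the rule logic easy to check in isolation.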

Feedback Loop State Management

When analysis fails, the system re-runs stages 3-5 while preserving state. cycle_state.json tracks feedback loop iterations with:

- current_cycle: Current iteration number
- max_cycles: Maximum allowed iterations
- failed_candidates: Variables that failed analysis
- failure_reasons: Why each candidate failed

The reset_stage_progress() function deletes progress.json to enable re-entry. Fast-track mode skips web searches (their results are unchanged), runs only the primary model and Table 1, and applies score penalties to failed candidates. Files from stages 3-5 are never pruned during active feedback cycles.
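The cycle bookkeeping can be sketched as below, using the four field names listed for cycle_state.json. The helper names `record_failure` and `apply_penalties`, the default `max_cycles=3`, the choice of a list and a dict for the two failure fields, and the 0.5 penalty factor are all assumptions for illustration.

```python
import json
import os


def record_failure(state_path: str, candidate: str, reason: str,
                   max_cycles: int = 3) -> bool:
    """Record a failed candidate; return True if another cycle is allowed."""
    if os.path.exists(state_path):
        with open(state_path, encoding="utf-8") as f:
            state = json.load(f)
    else:
        state = {"current_cycle": 0, "max_cycles": max_cycles,
                 "failed_candidates": [], "failure_reasons": {}}

    state["current_cycle"] += 1
    if candidate not in state["failed_candidates"]:
        state["failed_candidates"].append(candidate)
    state["failure_reasons"][candidate] = reason

    with open(state_path, "w", encoding="utf-8") as f:
        json.dump(state, f, indent=2)
    return state["current_cycle"] < state["max_cycles"]


def apply_penalties(scores: dict, failed: list, penalty: float = 0.5) -> dict:
    """Scale down scores of failed candidates (the factor is an assumption)."""
    return {k: v * penalty if k in failed else v for k, v in scores.items()}
```

Persisting the state to disk after every failure means an interrupted run can resume mid-cycle with the list of failed candidates intact.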

Resources

- GitHub Repository: https://github.com/DamarisDeng/paper-writing-system

- workflow/scripts/progress_utils.py: Progress tracking implementation
- workflow/scripts/context_manager.py: Context bundle and pruning system
- workflow/scripts/feedback_utils.py: Feedback loop management
- workflow/scripts/feasibility_validator.py: Pre-emptive validation